Optimal compression in human concept learning

Abstract

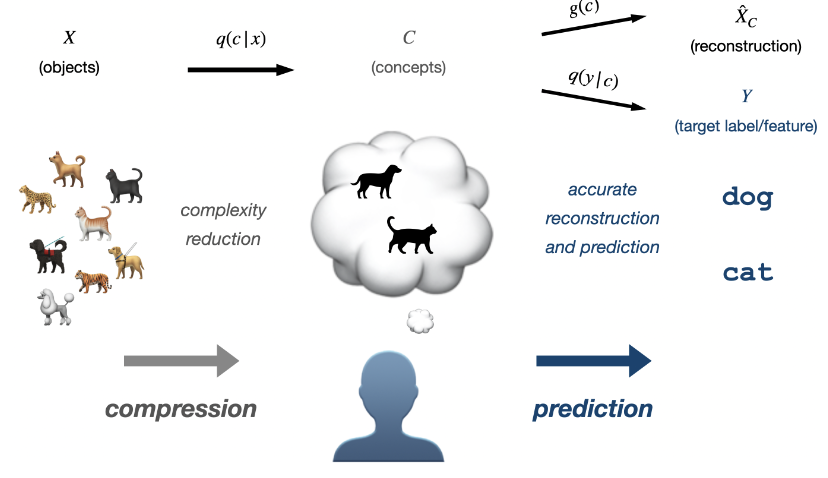

The computational principles that underlie human concept learning have been debated for decades. Here, we formalize and test a new perspective grounded in rate-distortion theory (RDT), the mathematical theory of optimal (lossy) data compression, which has recently gained popularity in cognitive science. Specifically, we characterize optimal conceptual systems as solutions to a special type of RDT problem, show how these optimal systems can generalize to unseen examples, and test their predictions for human behavior in three foundational concept-learning experiments. We find converging evidence that optimal compression may account for human concept learning. Our work also provides new insight into the relation between learnability and compressibility; integrates prototype, exemplar, and Bayesian approaches to human concepts within the RDT framework; and offers a potential theoretical link between concept learning and other cognitive functions that have been successfully characterized by efficient compression.