Teasing apart models of pragmatics using optimal reference game design

Abstract

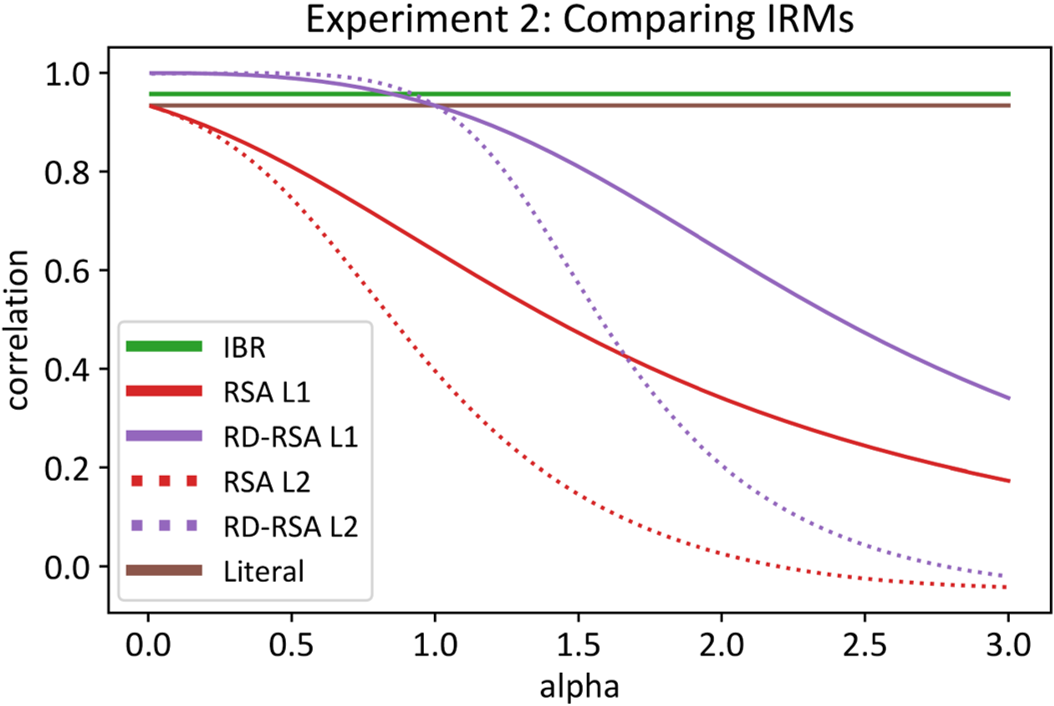

How do humans produce and comprehend language in pragmatic ways? A variety of models of pragmatic inference have been proposed, and these models are often evaluated on their ability to account for human inferences in reference game experiments. However, these experiments are not tailored to target theoretical differences between models or to clearly tease apart their predictions. We propose an optimal experiment design approach that systematically constructs reference games that best differentiate between models of human pragmatic reasoning. We demonstrate this approach by applying it to four models that have been debated in the literature: Grammar-based, Iterated Best Response (IBR), Rational Speech Act (RSA), and a recent variant of RSA grounded in Rate–Distortion theory (RD-RSA). Using these optimal reference game experiments, we find empirical evidence favoring iterated rationality models over the grammar-based model, as well as support for the relevance of Rate–Distortion theory to human pragmatic inferences. These results suggest that our optimal reference game design framework may help adjudicate between computational theories of pragmatic reasoning.