Toward human-like object naming in artificial neural systems

Abstract

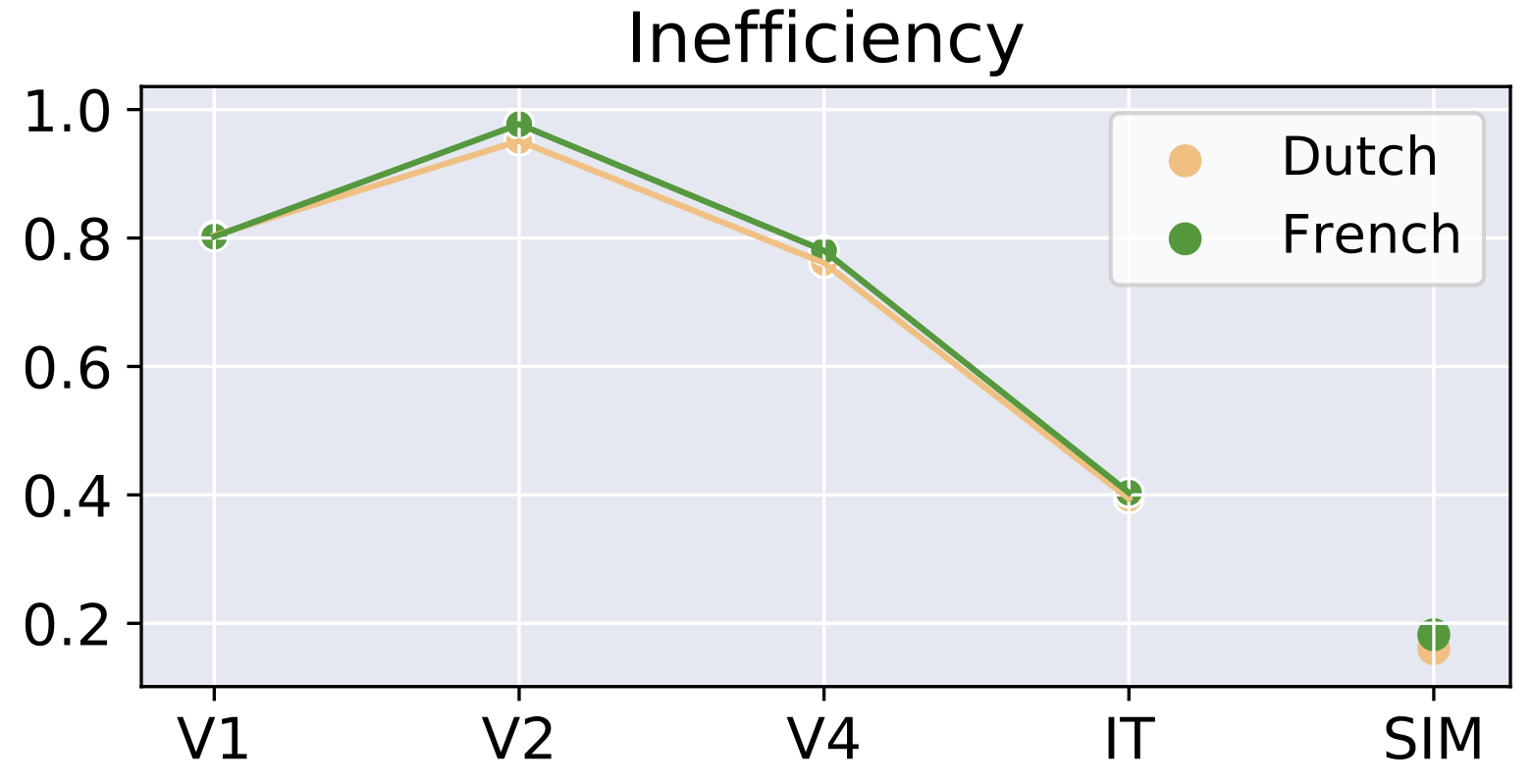

Partitioning a rich set of objects into words is a fundamental aspect of human language and a major challenge for machines. Recently, it has been argued that word meanings evolve under pressure to optimize the Information Bottleneck (IB) principle, and that this framework may be used to endow AI systems with human-like semantics. However, a key obstacle to applying this approach at scale is that it assumes an underlying representation of the environment, which is often unknown. Here, we address this obstacle by using deep learning models to specify such underlying representations. We demonstrate our approach in the domain of containers by evaluating optimal IB container-naming systems derived from the representations generated by each layer of CORnet-S, a brain-inspired deep learning image classifier. We show a gradient of success in accounting for the container-naming systems of Dutch and French: the deeper layer of CORnet-S, which roughly corresponds to a high-level object recognition area in the brain, outperforms shallower layers that correspond to lower-level visual processing. This suggests that our approach may be useful for testing the relevance of various types of non-linguistic representations to the emergence of word meanings, and could potentially aid in endowing artificial neural agents with human-like semantics.